The Authenticity Trap: Against the AI Slop Panic

Creativity, authorship, and the internet’s hottest moral panic.

Someone posts an essay. A video essay. A piece of artwork. A Stooop Substack. Within minutes, the reply arrives beneath it: “AI slop.”

No elaboration. No engagement with the argument. No discussion of the idea itself. Just the allegation: confident, dismissive, final.

Sometimes the accusation is correct. Entire industries have emerged around automated content generation: articles written in seconds, images mass-produced for engagement, endless scrolls of algorithmic filler designed to keep feeds moving.

The internet is, undeniably, flooded with machine-generated content. But something stranger has started happening alongside that flood. Increasingly, people dismiss work not because it is shallow or uninteresting, but because a tool might have been involved in its creation. An essay outlined with a language model becomes suspect. A piece edited with AI assistance becomes suspect. A student who uses generative tools to refine their thinking becomes suspect. In many cases, the conversation never reaches the work itself; the debate stops at the tool.

The phrase “AI slop” has become more than a critique of bad content. It has become a cultural reflex—a shorthand for a deeper anxiety about authorship, labor, and the changing relationship between humans and the tools they use to think. The early wave of automated media absolutely deserved criticism. Vast quantities of shallow, machine-generated material now circulate online, produced primarily to capture clicks and engagement. But the blanket rejection of anything touched by generative tools risks collapsing several very different creative practices into a single accusation.

And in that collapse, something important may be getting lost.

The Collapse of Categories

Today the phrase AI slop is used to describe three very different things. The “AI slop” narrative effectively collapses these distinct modes of engagement, ignoring the fundamental shifts happening in the creative process.

Fully automated production

Entire essays, images, or videos generated and posted with minimal human involvement. The human role is limited to prompting and publishing. These are the spam farms and content mills people are rightly reacting to: sludge that exists only to occupy space and capture engagement.

AI as productivity tool

A person writes, thinks, and structures their work, but delegates certain mechanical tasks to the machine. Grammar correction. Summarization. Drafting routine prose. The cognitive labor remains human; the tool handles execution.

This is where many people first encounter generative AI. I use Grammarly to make sure I don’t look and sound crazy. I ask my own local LLM to rephrase a tangled sentence or suggest a clearer transition. These are low-stakes uses, but they quietly change expectations. Someone who becomes comfortable using AI for editing may eventually try it for brainstorming. Someone who uses it for summarization might wonder what it could do with a half-finished poem. The productivity use case is not separate from creative use. It is often the on-ramp.

AI as creative partner

Here the relationship shifts. The human enters a genuine creative dialogue with the machine. They might feed the system their own rough prose and ask for metaphorical variations. They might use generated material as raw material for collage or remix. They might prompt the system into unexpected territory, then rework those surprises into something personal.

The direction of flow changes. In productivity use, it is:

human → tool → output

In creative partnership, it becomes:

human ↔ tool → something neither would have produced alone

The machine becomes a catalyst for thinking differently—not a replacement for thinking at all.

These categories blur at the edges. But the internet treats all three as the same. Fully automated spam, Grammarly-polished prose, and deliberate creative collaboration all receive the same label: AI slop.

They are not the same. The impulse to lump these together ignores that AI doesn’t automatically equate to “slop”; it’s the human intention behind its deployment that determines value. Calling everything “AI slop” is like calling photography, Photoshop, and stock photo spam the same phenomenon simply because they all involve images.

Low Effort Is Not New

A common criticism of AI-assisted work is that it lowers the effort required to produce something. But low effort has always existed in culture.

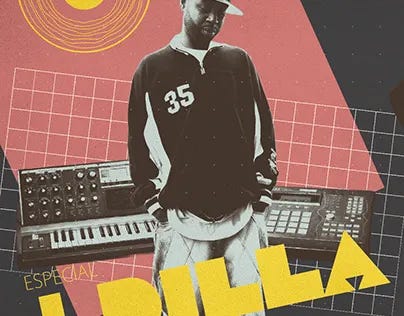

Memes, reaction GIFs, quick sketches, jokes, improvisations, mashups—many creative forms begin as low-effort gestures. Yet entire art forms have grown out of those gestures. Consider the history of sampling in hip-hop.

Early critics dismissed sampling as theft or laziness—producers were “just borrowing” someone else’s music instead of creating their own. But artists like DJ Kool Herc, Grandmaster Flash, and later J Dilla didn’t simply re-use snippets; they reimagined them, creating a grammar of sound that transformed popular music. Two records played together became a conversation across decades; fragments of old music became raw material for something entirely new. What critics called low effort turned out to be a new language of creativity.

If low effort isn’t new, why does AI provoke such intense backlash?

Because AI is not just a creative tool. It sits inside a larger technological system.

Large language models and generative systems are developed by massive corporations such as OpenAI, Google, and Microsoft. Their development involves enormous computational resources, vast data extraction, and significant energy consumption.

The anxiety fueling the “AI slop” rejection isn’t solely about the tool itself. It taps into genuine concerns—the environmental cost of AI training, the concentration of technological power in the hands of a few corporations, the flood of automated content, and the potential displacement of human labor. These concerns are legitimate.

Blaming individual users risks placing the burden on the most vulnerable, artists and students, rather than on the corporations and institutions actually shaping these systems. To demand that individual artists solve structural problems by forgoing a tool is to make the most experimental members of the creative class pay for corporate and governmental inaction. The solution to concentrated power is not cultural Luddism, but political and regulatory action.

Yet online discourse often collapses these distinctions. The artist becomes the proxy target for anxieties about the entire industry—a form of cultural deflection that risks silencing the critiques of the systems generating the very problems people are reacting to.

The Authenticity Trap

Tying back to my previous essay, we are all becoming “Blade Runners.” Instead of asking:

Is this work interesting? Is the argument compelling? Is the idea worth thinking about?

Audiences increasingly ask a different question:

Was AI involved?

People begin performing a kind of cultural forensics—what I call “Blade Running”—searching for linguistic patterns or stylistic markers that might reveal the presence of a machine. Like the Voight-Kampff test in Blade Runner, the goal is no longer interpretation but detection: proving whether something is human.

This relentless focus on detecting AI—this cultural forensics—represents a misdirection. The real question is not how the work was made, but whether the work itself resonates. It’s a shift from genuine critique to performative judgment, potentially chilling experimentation and rewarding cautious, almost paranoid, creative practices.

Ironically, this obsession with detecting AI can discourage exactly the kind of thoughtful work people claim to value. When every idea risks dismissal as “AI slop,” creators become cautious, defensive, or silent. The discourse begins to punish experimentation rather than reward it.

A deeper discomfort may come from what these tools extend. For most of history, tools extended human physical ability. A hammer extends the arm. A camera extends the eye. A microphone, the voice.

But systems like ChatGPT and DeepSeek extend something different: cognitive labor. They assist with language, structure ideas, and synthesize information.

When tools begin participating in intellectual processes, the boundary between instrument and collaborator becomes blurry. This raises uncomfortable questions about authorship and the evolving role of the human mind in creative processes. This is a fundamental shift, not a simple replacement, and cultural acceptance of it is still developing.

Is the human still the author? Is the machine part of the authorship? Does assistance invalidate creativity, or simply transform it? These questions do not yet have stable answers. ChatGPT itself insists that the ideas remain our own, but it is AI and can make mistakes (wink).

The Environmental and Social Cost

There is also a material dimension that cannot be ignored.

Training and operating large AI systems consume significant computational power and energy. Data centers supporting generative models operate at scales comparable to major industrial infrastructure. Critics argue that this environmental cost must be weighed against the value the technology produces. But that same question could be asked of many modern technologies. Video streaming, cryptocurrency mining, and large-scale gaming hardware all consume enormous amounts of energy. Society tolerates these costs because the activities they enable are culturally accepted. AI disrupts a different domain: intellectual labor.

When machines participate in thinking processes, people begin questioning whether the output justifies the cost.

The internet is flooded with automated content. Corporations are aggressively integrating AI into everyday software. Educational institutions are struggling to redefine what learning means in an AI-assisted world.

In response, culture has developed a blunt defense mechanism: reject anything associated with AI. But blunt mechanisms rarely last.

The Question We Should Be Asking

Ultimately, the “AI slop” panic isn’t only about rejecting bad content; it’s also about resisting a fundamental shift in the creative landscape.

The real question is not whether AI exists in the creative process. The question is what humans choose to do with it.

If generative systems are used to flood the internet with meaningless content, criticism is justified.

But if people use these tools to extend their thinking, experiment with new forms, or explore ideas that would otherwise remain unrealized, dismissing the work outright may mean missing the beginning of something important.

The danger of the “AI slop” panic is not just that it rejects bad content. The danger is that it may cause us to ignore genuine innovation simply because the tools involved make us uncomfortable.

We need a more nuanced conversation, one that prioritizes critical engagement with ideas over panicked scrutiny of tools.

The history of creativity suggests that tools rarely determine the value of an idea. People do.

And if culture spends more time investigating the tools than engaging with the ideas themselves, we may discover that the real casualty of the AI debate is not creativity—but attention.
